Fully Connected Prototype and Function List¶

Description¶

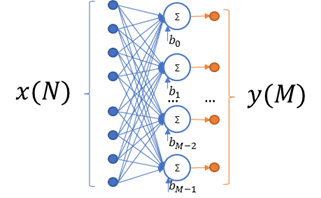

This kernel implements a fully connected layer, also usually referred to as the inner product or dense layer.

Each value of output tensor is calculated according to the following formula:

Where:

\(x_{j}\) - \(j_{\text{th}}\) value in input tensor

\(y_{i}\) - output of \(i_{\text{th}}\) neuron (\(i_{\text{th}}\) value in output tensor)

\(W_{i,j}\) - weight of \(j_{\text{th}}\ \)input element for \(i_{\text{th}}\) neuron.

\(b_{i}\) - bias for \(i_{\text{th}}\) neuron

Optionally, a saturating ReLU activation function can be applied to the result of the calculations during the function’s execution. For more information on supported ReLU types, see ReLU Prototype and Function List.

This is a MAC-based kernel which implies accumulation. See Quantization: Influence of Accumulator Bit Depth for more information on related quantization aspects. The Number of accumulation series is equal to input size.

Functions¶

Functions that implement fully connected kernels have the following prototype:

mli_status mli_krn_fully_connected_<data_format>(

const mli_tensor *in,

const mli_tensor *weights,

const mli_tensor *bias,

const mli_fully_connected_cfg *cfg,

mli_tensor *out);

where data_format is one of the data formats listed in Table MLI Data Formats

and the function parameters are shown in the following table:

Parameter |

Type |

Description |

|---|---|---|

|

|

[IN] Pointer to constant input tensor. |

|

|

[IN] Pointer to constant weights tensor. |

|

|

[IN] Pointer to constant bias tensor. |

|

|

[IN] Pointer to fully connected parameters structure. |

|

|

[IN | OUT] Pointer to output tensor. Result is stored here. |

mli_fully_connected_cfgis defined as:

typedef struct {

mli_relu_cfg relu;

} mli_fully_connected cfg;

Field Name |

Type |

Description |

|---|---|---|

|

|

Type of ReLU activation applied to output values. See ReLU Prototype and Function List for definition of this structure |

Here is a list of all available Fully Connected functions:

Function Name |

Details |

|---|---|

|

In/out/weights data format: sa8 Bias data format: sa32 |

|

All tensors data format: fx16 |

|

In/out data format: fx16 Weights/Bias data format: fx8 |

|

In/out/weights data format: sa8 Bias data format: sa32 Bias data adjusted to include zero point additives |

mli_krn_fully_connected_sa8_sa8_sa32_ext_bias is a specialized version of

mli_krn_fully_connected_sa8_sa8_sa32 which performs calculations much faster, but requires bias

data to be adjusted according to the following formula:

Where:

\(in\_zp\) - zero point of input sa8 tensor

\(W_{i,j}\) - weight of \(j_{\text{th}}\ \)input element for \(i_{\text{th}}\) neuron.

\(b_{i}\) - original sa32 bias for \(i_{\text{th}}\) neuron

\(\hat{b}_{i}\) - adjusted sa32 bias for \(i_{\text{th}}\) neuron

Conditions¶

Ensure that you satisfy the following general conditions before calling the function:

in,out,weightsandbiastensors must be valid (see mli_tensor Structure Field Descriptions) and satisfy data requirements of the selected version of the kernel.Shapes of

in,out,weightsandbiastensors must be compatible, which implies the following requirements:

intensor might be of any shape and rank. Only total number of elements is considered.

weightsis a 2-dimensional tensor (rank==2) of shape \((N, M)\), where \(N\) is the total number of elements in the input tensor and \(M\) is the total number of neurons and is equal to output length.

biasmust be a one-dimensional tensor (rank==1). Its length must be equal to \(M\) dimension (number of filters and is equal to output length) of weights tensor.

outmust be a one-dimensional tensor (rank==1). Its length must be equal to \(M\) dimension (number of filters) of weights tensor.

inandouttensors must not point to overlapped memory regions.

mem_stridemust satisfy the following statements:

For

inandouttensors - memstride must reflect the shape, e.g memory of these tensors must be contiguous.For

weightsandbiastensor - memstride of the innermost dimension must be equal to 1.

For fx16 and fx16_fx8_fx8 versions of kernel, in addition to the general conditions, ensure that you satisfy the following quantization conditions before calling the function:

The number of

frac_bitsin thebiasandouttensors must not exceed the sum offrac_bitsin theinandweightstensors.

For sa8_sa8_sa32 versions of kernel, in addition to the general conditions, ensure that you satisfy the following quantization conditions before calling the function:

inandouttensors must be quantized on the tensor level. It implies that each tensor contains a single scale factor and a single zero offset.Zero offset of

inandouttensors must be within [-128, 127] range.

weightsandbiastensors must be symmetric. Both must be quantized at the same level. Allowed options are

Per Tensor level. This implies that each tensor contains a single scale factor and a single zero offset equal to 0.

Per \(M\) dimension level (number of neurons). This implies that each tensor contains separate scale point for each sub-tensor. All tensors contain single zero offset equal to 0.

Scale factors of bias tensor must be equal to the multiplication of input scale factor broadcasted on weights array of scale factors. See the example for the similar condition in the Convolution 2D Prototype and Function List.

Ensure that you satisfy the platform-specific conditions in addition to those listed above (see the Platform Specific Details chapter).

Result¶

These functions only modify the memory pointed by out.data.mem field.

It is assumed that all the other fields of out tensor are properly populated

to be used in calculations and are not modified by the kernel.

Depending on the debug level (see section Error Codes) this function performs a parameter

check and returns the result as an mli_status code as described in section Kernel Specific Configuration Structures.